
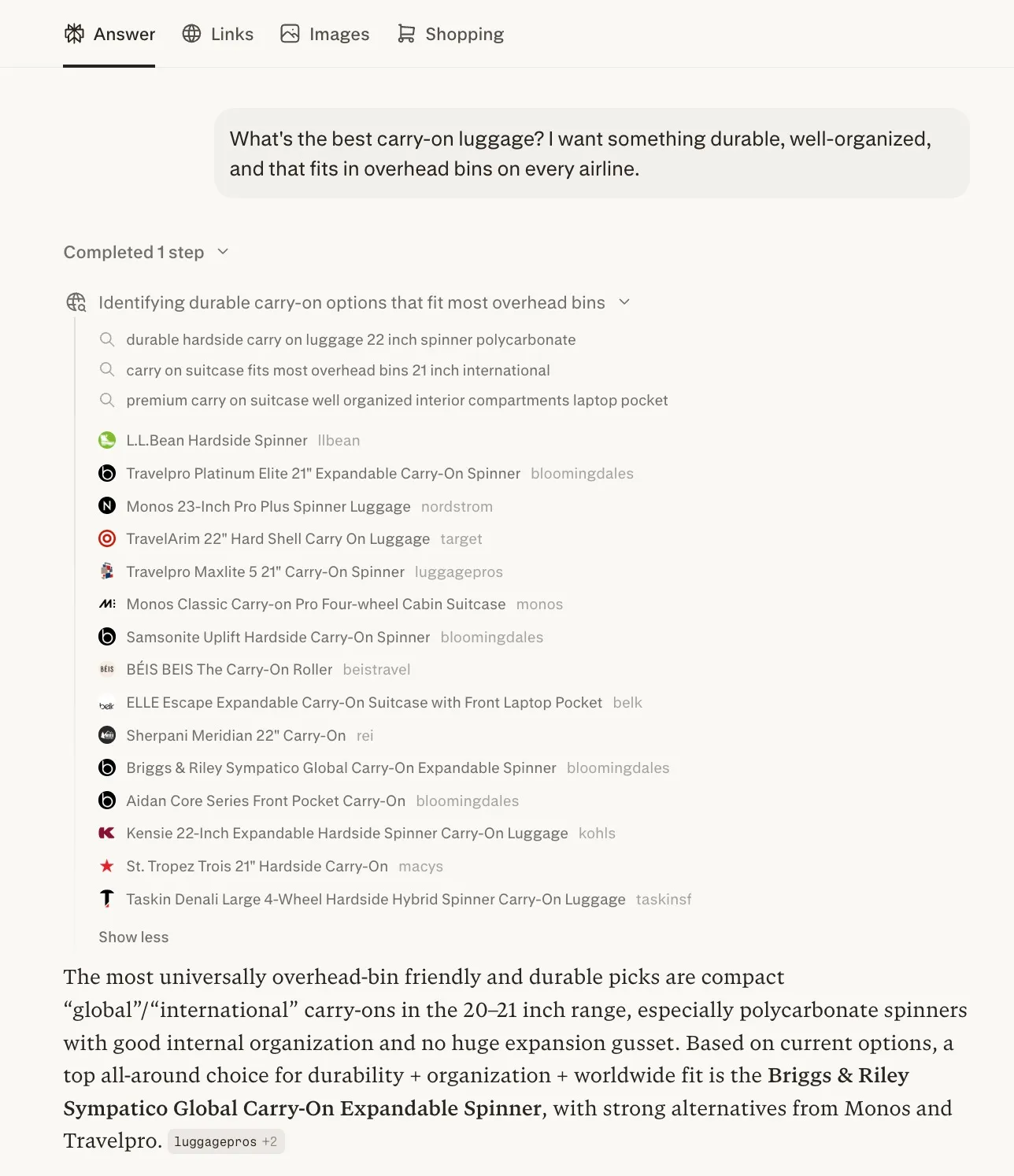

We asked Perplexity: “What’s the best carry-on luggage? I want something durable, well-organized, and that fits in overhead bins on every airline.”

Perplexity searched the web, found 15 products, and listed them. Eighth on that list: “BÉIS The Carry-On Roller” from Beis Travel. Perplexity found the product. It indexed the page. It knew the brand existed.

Then it wrote its answer: Based on current options, a top all‑around choice for durability + organization + worldwide fit is the Briggs & Riley Sympatico Global Carry‑On Expandable Spinner, with strong alternatives from Monos and Travelpro.

Beis didn’t make the recommendation.

This isn’t a glitch. It’s a pattern we’ve noticed, and that prompted us to run an experiment.

After running the same analysis across 25 ecommerce brands, three AI platforms, and 450 queries, we found it happening everywhere.

Here’s what we did.

The Experiment

We tested whether brands appear in the answers AI platforms generate when buyers ask purchase-intent questions. We did this for 25 DTC and ecommerce brands across 14 categories, queried through ChatGPT (GPT-4o), Claude (Sonnet), and Perplexity (Sonar) in a controlled, non-personalized environment so results weren’t influenced by history, location, or past behavior.

We structured the test to mirror how buyers search:

- Two buyer journeys per brand: one using core category language (how the brand describes itself), one using adjacent use-case language (how buyers describe their problem).

- Three stages per journey: problem-aware (buyer describes a need), evaluation (buyer compares options), and shortlisting (buyer asks about the brand by name).

- 450 total outputs: each records whether the brand was recommended, mentioned, or absent. The result is a constraint matrix that maps where every brand is visible and where it disappears.
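The structure above can be sketched as a small data structure. This is a minimal illustration rather than the tooling used in the study: the function names, journey labels, and placeholder brand names are assumptions made for the example. It shows how two journeys, three stages, and three platforms give 18 cells per brand and 450 cells across 25 brands.

```python
from itertools import product

PLATFORMS = ("ChatGPT", "Claude", "Perplexity")
JOURNEYS = ("core-category", "adjacent-use-case")   # labels are illustrative
STAGES = ("problem-aware", "evaluation", "shortlisting")
OUTCOMES = ("recommended", "mentioned", "absent")

def empty_matrix(brands):
    """Return a dict with one unfilled cell per brand x journey x stage x platform."""
    return {key: None for key in product(brands, JOURNEYS, STAGES, PLATFORMS)}

def record(matrix, key, outcome):
    """Store one observed outcome, rejecting unknown cells and unknown labels."""
    if key not in matrix:
        raise KeyError(f"unknown cell: {key}")
    if outcome not in OUTCOMES:
        raise ValueError(f"unknown outcome: {outcome}")
    matrix[key] = outcome

brands = [f"brand-{i:02d}" for i in range(1, 26)]   # 25 placeholder names
matrix = empty_matrix(brands)
cells_per_brand = len(JOURNEYS) * len(STAGES) * len(PLATFORMS)
print(cells_per_brand, len(matrix))                 # 18 450
```

Keying each cell by the full (brand, journey, stage, platform) tuple keeps every observation addressable, so gaps can later be grouped by stage, platform, or query framing.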

What Ghost Rankings Cost You

A ghost ranking doesn’t show up in your analytics. There’s no line item for “buyer who asked ChatGPT instead of Google and never found you.” No keyword ranking report flags it. No traffic chart drops because the traffic never existed in the first place.

That’s what makes it dangerous. The revenue loss is invisible, which means the urgency to fix it is zero until a competitor has already captured the position and revenue. But the data tells you what the cost looks like.

Ghost Rankings in Action

One luggage brand was ghost-ranked in ten of 18 cells across its constraint matrix.

In every one of those cells, the same competitor appeared. That’s not a missed impression. It’s an entire purchase journey where the buyer’s consideration set formed around a competitor, reinforced by three different AI platforms, at every stage from problem-aware to shortlisting.

| Platform | Problem-Aware | Evaluation | Shortlisting |
|---|---|---|---|
| Claude | Ghost: Away, Rimowa | Ghost: Away, Samsonite | Rec'd |
| ChatGPT | Ghost: Away, Samsonite | Ghost: Away, Rimowa | Rec'd |
| Perplexity | Ghost: Away, Travelpro | Ghost: Away, Calpak | Rec'd |

| Platform | Problem-Aware | Evaluation | Shortlisting |
|---|---|---|---|
| Claude | Not Found | Ghost: Away | Rec'd |
| ChatGPT | Not Found | Ghost: Away | Rec'd |
| Perplexity | Not Found | Ghost: Away | Ghost: Away, Calpak |

Attribution Can Wait

Your current SEO stack can’t see this. Ghost rankings live on a different surface entirely.

Analytics platforms measure traffic that arrived. They can’t show you the buyer who asked an AI for help, got a list of recommendations that didn’t include you, and bought from the brand that did. That’s an attribution problem.

Attribution will catch up: the tools are being built, tracking will improve, and eventually there will be a clean line between AI recommendation and purchase.

But the revenue being captured today isn’t waiting for the measurement to arrive. Buyers are already using these platforms. Consideration sets are already forming.

The brands that appear in those answers are already capturing the purchases that don’t show up in anyone’s “AI-referred” column yet.

The absence of attribution isn’t a reason to ignore this issue. It just means most brands haven’t noticed it yet.

Our Small Test Uncovered a Massive Issue

We tested 25 DTC and ecommerce brands across 14 categories, including outdoor, footwear, home, beauty, travel, personal care, food and beverage, fitness, fashion, pets, kids, and cookware.

The results:

- Twenty of 25 brands (80%) were invisible at the problem-aware stage, when the buyer describes their need without naming a brand and the consideration set forms

- Ten of 25 brands (40%) were invisible for adjacent use-case queries even when they dominated their core category language

- Three of 25 brands (12%) had confirmed ghost rankings: their own content was cited as a source, but a competitor was recommended instead

- Eleven of 25 brands (44%) had an identifiable shadow competitor, the same one or two names filling every gap, across all platforms, every time the brand disappeared

- Only three of 25 brands showed zero AI visibility gaps across both journeys and all three platforms

Every one of these brands ranks on Google. Several rank on page one for their core category terms. Their Google visibility tells them everything is fine. Their AI visibility tells a different story.

The data reveals two distinct problems.

- The first is AI invisibility: the brand simply doesn’t appear when buyers frame their search in a way the AI doesn’t associate with the brand. This is a common, fixable problem, but not the more dangerous one.

- The second is what we call a ghost ranking: when the AI cites or draws on a brand’s own content to answer a buyer’s question, then recommends a competitor. The brand trained the AI. A competitor got the buyer. It’s not invisibility. It’s being used without being chosen.

Both patterns showed up across our 25 brands. They’re related problems with different causes and different fixes. The patterns below show where each one lives.

The Five AI Visibility Patterns

Not all AI visibility gaps look the same. Across our data, five distinct patterns emerged.

- Patterns 1 and 2 are invisibility problems: the brand doesn’t appear

- Patterns 3 through 5 are ghost rankings proper: the brand’s content is present in the AI’s knowledge, but the recommendation goes elsewhere

Some brands hit one pattern. Some hit four. The pattern determines the fix.

Pattern 1: Early Funnel Blackout

20 of 25 brands (80%) showed this pattern

The brand shows up when a buyer searches its name (branded search) or evaluates it directly. But when a buyer describes their problem without naming a brand (the way most purchase journeys actually start), the brand disappears.

Optimizing for non-branded search has been a cornerstone of our category-first SEO, and from what we’re seeing in AI search, this strategy will need to carry over to these new surfaces as well.

One Early Funnel Blackout from Our Research

Cotopaxi, a mid-sized outdoor apparel brand, was recommended by all three AI platforms when buyers asked about “best sustainable outdoor backpack.”

But when the query shifted to “what bag should I bring on a carry-on only trip” (the way a real traveler frames the question), the brand vanished at the evaluation stage across all three platforms. Osprey, Tortuga, and Away filled the gap.

The consideration set formed without them. By the time the buyer reaches the brand-comparison stage, they’re already comparing competitors.

| Platform | Problem-Aware | Evaluation | Shortlisting |
|---|---|---|---|
| Claude | Ghost: Patagonia, Osprey | Rec'd | Rec'd |
| ChatGPT | Ghost: Patagonia, Osprey | Rec'd | Rec'd |
| Perplexity | Ghost: Patagonia, Osprey | Ghost: Patagonia | Rec'd |

| Platform | Problem-Aware | Evaluation | Shortlisting |
|---|---|---|---|
| Claude | Not Found | Ghost: Away | Rec'd |
| ChatGPT | Not Found | Ghost: Osprey, Tortuga | Rec'd |
| Perplexity | Not Found | Ghost: Away, Topo Designs | Rec'd |

Pattern 2: Use-Case Gap

10 of 25 brands (40%) showed this pattern

The brand dominates its core category language but disappears when buyers frame the problem differently. The AI associates a brand with how the brand describes its own products, not with how buyers describe their problems.

One Use-Case Gap Example from Our Research

Allbirds was recommended across all three platforms for “best sustainable sneakers,” the exact language on their homepage.

When the query shifted to “most comfortable shoes for walking to work every day” (how a commuter actually searches), the brand vanished on Claude and Perplexity. New Balance, Brooks, and Hoka filled every slot.

The same product solves both problems. But the AI doesn’t know that.

| Platform | Problem-Aware | Evaluation | Shortlisting |
|---|---|---|---|
| Claude | Rec'd | Rec'd | Rec'd |
| ChatGPT | Not Found | Rec'd | Rec'd |
| Perplexity | Rec'd | Rec'd | Rec'd |

| Platform | Problem-Aware | Evaluation | Shortlisting |
|---|---|---|---|
| Claude | Ghost: New Balance, Brooks | Rec'd | Rec'd |
| ChatGPT | Not Found | Rec'd | Rec'd |
| Perplexity | Ghost: New Balance, Hoka | Ghost: New Balance, ASICS | Rec'd |

Pattern 3: Shadow Competitor

11 of 25 brands (44%) had identifiable shadow competitors

When the brand disappears, the same competitor fills the gap every time. Not a random competitor or a rotating cast of them. The same one or two names appear across platforms, across queries, across journey stages.

What We Saw in Our Research

- For a luggage brand, Away appeared in ten of 18 cells. Every query where the brand was ghost-ranked, Away was the replacement.

- For a developmental toy brand, Hape filled seven of seven ghost-ranked cells.

- For a cookware brand, GreenPan appeared seven times.

- For outdoor apparel, Patagonia and Osprey appeared together in nearly every gap.

These shadow competitors aren’t necessarily outranking the brand on Google. They’re replacing the brand in the AI answer, which means they’re capturing the buyer’s consideration at the moment it forms.
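One way to spot a shadow competitor in a finished matrix is to count which names recur in the ghost-ranked cells. The sketch below transcribes the 18 cells from the luggage brand's two tables earlier in the article. The tuple layout, the `shadow_competitors` helper, and the 50% threshold are assumptions made for illustration, not the study's actual method.

```python
from collections import Counter

# (table, platform, stage, status, competitors named in the cell)
LUGGAGE_CELLS = [
    (1, "Claude", "problem-aware", "ghost", ["Away", "Rimowa"]),
    (1, "Claude", "evaluation", "ghost", ["Away", "Samsonite"]),
    (1, "Claude", "shortlisting", "rec", []),
    (1, "ChatGPT", "problem-aware", "ghost", ["Away", "Samsonite"]),
    (1, "ChatGPT", "evaluation", "ghost", ["Away", "Rimowa"]),
    (1, "ChatGPT", "shortlisting", "rec", []),
    (1, "Perplexity", "problem-aware", "ghost", ["Away", "Travelpro"]),
    (1, "Perplexity", "evaluation", "ghost", ["Away", "Calpak"]),
    (1, "Perplexity", "shortlisting", "rec", []),
    (2, "Claude", "problem-aware", "not_found", []),
    (2, "Claude", "evaluation", "ghost", ["Away"]),
    (2, "Claude", "shortlisting", "rec", []),
    (2, "ChatGPT", "problem-aware", "not_found", []),
    (2, "ChatGPT", "evaluation", "ghost", ["Away"]),
    (2, "ChatGPT", "shortlisting", "rec", []),
    (2, "Perplexity", "problem-aware", "not_found", []),
    (2, "Perplexity", "evaluation", "ghost", ["Away"]),
    (2, "Perplexity", "shortlisting", "ghost", ["Away", "Calpak"]),
]

def shadow_competitors(cells, min_share=0.5):
    """Return (ghost cell count, competitors present in at least min_share of them)."""
    ghost = [cell for cell in cells if cell[3] == "ghost"]
    counts = Counter(name for cell in ghost for name in set(cell[4]))
    shadows = {name: n for name, n in counts.items()
               if ghost and n / len(ghost) >= min_share}
    return len(ghost), shadows

ghost_count, shadows = shadow_competitors(LUGGAGE_CELLS)
print(ghost_count, shadows)   # 10 {'Away': 10}
```

The other names in those cells (Rimowa, Samsonite, Calpak, Travelpro) each appear once or twice, which is the difference between a rotating cast and a shadow competitor.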

Pattern 4: Citation Paradox

3 of 25 brands (12%) confirmed — likely underreported

The brand’s own content is cited as a source, but the recommendation goes to a competitor. The brand is training the AI to trust its expertise while getting zero brand lift from that trust.

When we queried Perplexity about the best weekender bag for travel, it cited six sources. Two were from Beis:

- beistravel.com/products/the-weekender-in-black

- beistravel.com/pages/beis-weekender-reviews

Perplexity read both, used them as research material, but recommended Away and Calpak. The brand that wrote the content didn’t make the answer.

The same pattern appeared with Traeger. When we asked Perplexity about smoking brisket as a beginner, it cited traeger.com/recipes/beginners-brisket, the brand’s own beginner recipe guide, but recommended Weber. Traeger’s content trained the AI. Weber got the buyer.

We found a third instance with Casper, where the brand’s content was cited for a back pain query but no brand was recommended at all. The AI used the content as educational material without connecting it to a purchasing decision.

Note: This pattern is likely underreported. Detecting it requires the AI to surface its sources, which only Perplexity does consistently. ChatGPT and Claude don’t show citations, so the same dynamic could be happening without leaving a receipt.
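The check described in this section can be written as a simple rule: the brand's domain appears among the cited sources, but the brand's name does not appear among the recommendations. The following is a rough sketch under that assumption. The helper names are hypothetical, the brand match is a naive exact-name comparison, and only the URLs and recommendations quoted above are used as inputs.

```python
from urllib.parse import urlparse

def host_of(url):
    """Return the hostname of a URL, tolerating a missing scheme and a www. prefix."""
    if "://" not in url:
        url = "https://" + url
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host

def is_citation_paradox(brand, brand_domain, cited_urls, recommended):
    """True when the brand's own domain is cited but the brand is not recommended."""
    cited = any(
        host_of(u) == brand_domain or host_of(u).endswith("." + brand_domain)
        for u in cited_urls
    )
    chosen = brand.lower() in {name.lower() for name in recommended}
    return cited and not chosen

beis = is_citation_paradox(
    "Beis",
    "beistravel.com",
    ["beistravel.com/products/the-weekender-in-black",
     "beistravel.com/pages/beis-weekender-reviews"],
    ["Away", "Calpak"],
)
traeger = is_citation_paradox(
    "Traeger", "traeger.com", ["traeger.com/recipes/beginners-brisket"], ["Weber"]
)
print(beis, traeger)   # True True
```

A real audit would need looser brand matching (spelling variants such as "BÉIS", product names) and would still only work on platforms that expose their sources.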

Pattern 5: Multi-turn Snowball

2 of 36 threads full snowball · 25 of 36 recovered only when buyer named the brand

The brand is excluded early in a multi-turn conversation and stays excluded. The AI builds context around competitors, and every follow-up reinforces the gap. The longer the conversation goes, the harder it becomes for the brand to enter the consideration set.

The full snowball, where exclusion at the problem-aware stage compounds through every follow-up, appeared in two of 36 multi-turn threads, both involving Beis on Perplexity.

Ghost-ranked at problem-aware, ghost-ranked at evaluation, ghost-ranked at shortlisting. Never recovered across the entire thread.

But the practical effect is wider: in 25 of 36 threads, brands only showed up once the buyer asked for them by name — at the stage where they already knew the brand existed. If the buyer never gets to that point, the brand never enters the consideration set at all.
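The two outcomes counted above can be separated with a small classifier over per-stage visibility. This is an illustrative sketch, assuming each thread is reduced to one visible/not-visible flag per stage; the function name and the third label are made up for the example.

```python
STAGES = ("problem-aware", "evaluation", "shortlisting")

def classify_thread(visible_by_stage):
    """Label a multi-turn thread from a dict mapping each stage to True/False."""
    problem_aware, evaluation, shortlisting = (visible_by_stage[s] for s in STAGES)
    if not (problem_aware or evaluation or shortlisting):
        return "full_snowball"            # excluded at every stage
    if shortlisting and not (problem_aware or evaluation):
        return "recovered_when_named"     # appears only once the buyer names it
    return "visible_before_named"         # appeared at an earlier stage

never = {"problem-aware": False, "evaluation": False, "shortlisting": False}
late = {"problem-aware": False, "evaluation": False, "shortlisting": True}
print(classify_thread(never), classify_thread(late))
# full_snowball recovered_when_named
```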

The Control Cases

Three of the 25 brands (a home appliance brand, a pet supplies brand, and a recovery device brand) showed zero ghost rankings across both journeys and all three platforms. Six more showed only a single ghost-ranked cell out of 18.

What these clean brands had in common: dominant brand-to-category association.

When a buyer asks about cordless vacuums, the AI knows who makes them. When a buyer asks about pet food, the AI knows where to buy it. When a buyer asks about massage guns, the AI knows the category creator.

This matters because it means the diagnostic isn’t rigged. Ghost rankings aren’t inevitable. They’re a structural problem that strong category association solves.

The brands with clean results didn’t get lucky. They’ve built content-to-category authority that the AI can parse.

How to Find Your Ghost Rankings (Manually)

You can’t audit what you can’t see. Traditional rank tracking monitors Google positions. Ghost rankings live on a different surface entirely.

The diagnostic starts with three inputs:

- Your brand

- Your category

- Your top purchase-intent keywords

For each keyword, run the buyer query through ChatGPT, Claude, and Perplexity at three journey stages:

- Problem-aware

- Evaluation

- Shortlisting

What you’re looking for:

- Where does the brand appear? Recommended, mentioned, or absent?

- Where does it disappear? Which stage? Which platform? Which query framing?

- Who fills the gap? Is it the same competitor every time?

- Is your content cited without your brand being recommended? (Perplexity only; check the source footnotes)

The output is a constraint matrix: a grid that maps your visibility across every combination of journey, stage, and platform. The patterns in that grid tell you exactly where you’re losing buyers and who’s capturing them. That’s the foundation of our GEO Intelligence Report.
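If you record your manual checks as you go, the grid can be printed in the same layout as the tables in this article. The sketch below assumes observations are stored in a dict keyed by (journey, stage, platform); the `render_grid` name, the journey label, and the sample entries are placeholders, not real results.

```python
STAGES = ("Problem-Aware", "Evaluation", "Shortlisting")
PLATFORMS = ("Claude", "ChatGPT", "Perplexity")

def render_grid(observations, journey):
    """Render one journey as a table, marking cells that were never checked."""
    lines = ["| Platform | " + " | ".join(STAGES) + " |", "|---|---|---|---|"]
    for platform in PLATFORMS:
        row = [observations.get((journey, stage, platform), "(not run)")
               for stage in STAGES]
        lines.append(f"| {platform} | " + " | ".join(row) + " |")
    return "\n".join(lines)

# Made-up sample entries for a single journey, for illustration only.
observations = {
    ("core", "Problem-Aware", "Claude"): "Rec'd",
    ("core", "Evaluation", "Claude"): "Ghost: Competitor A",
}
grid = render_grid(observations, "core")
print(grid)
```

Cells left as "(not run)" make it obvious which platform and stage combinations still need a query before any pattern is read from the grid.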

What This Means for Your Brand

Every brand in this study has a marketing team, most have an SEO program, and several most likely have agencies. Yet, I’m going to guess most of them don’t know this is happening. Not because their teams failed, but because the tools they use were built for a search surface that is no longer the only one.

The 25 brands in this study rank on Google. Their SEO dashboards show green. Their analytics track the traffic that arrives. None of those instruments measure the buyer who asked an AI instead and got an answer that didn’t include them.

That’s the gap. It’s not a content gap or a keyword gap. It’s a measurement gap. And the brands that close it first won’t just have the understanding to fix their AI visibility. They’ll be able to capture the consideration their competitors don’t know they’re losing yet.

The diagnostic is simpler than it sounds. Start with five queries. Pick your top purchase-intent keywords and run each one through ChatGPT, Claude, and Perplexity as a problem-aware search, the way a buyer who doesn’t know your brand would phrase it. See which brands are in the answer. See which aren’t. That’s your ghost ranking exposure in 20 minutes.

If what you find warrants a full audit, that’s what our GEO Intelligence Report is for. Here’s how we work with brands like yours.

The constraint matrix data referenced in this article was collected on March 23, 2026 across ChatGPT (GPT-4o), Claude (Sonnet), and Perplexity (Sonar). 25 brands, 14 categories, 450 cells. Single-turn prompt methodology: two buyer journeys × three stages × three LLMs per brand, plus citation follow-ups on Perplexity. Pattern 5 (Multi-turn Snowball) data collected in a separate run: six brands, 36 multi-turn threads across three LLMs, conducted March 2026.